Our R&D team has been saying for years that we will eventually create virtual content from within the virtual world. Imagine standing in a virtual environment and being able to conjure almost anything, instantly. It brings to mind Star Trek’s Holodeck, where you could just say it and AI would create it: the computer was able to magically generate an entire world.

Although we have refined our creative processes over the last 12 years, we’ll never be able to match the likes of the Holodeck. Or will we? I know it sounds like a far-fetched notion, but frankly, things are coming together to make some form of this possible very soon.

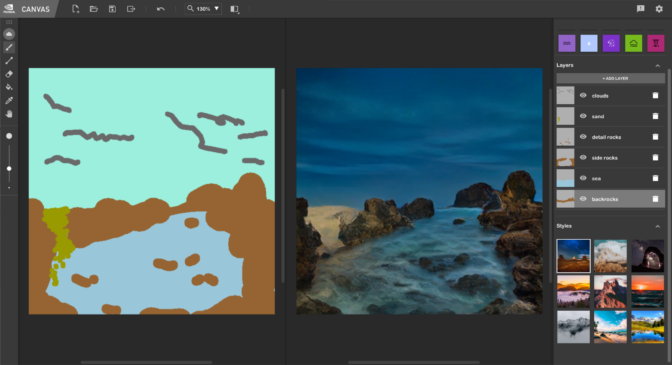

There have been amazing advances in the use of AI in the creative field, and NVIDIA’s Canvas is one example. The new NVIDIA Canvas app uses AI to allow anyone to paint beautiful digital artwork in real time through simple brush strokes.

Even with limited features (more to come soon), Canvas is seriously impressive. Simple shapes and lines are instantly interpreted as real-world visuals, like mountains or rivers. As you draw, the AI renders amazing results in real time.

Canvas also has style filters, and applying these allows the user to adopt the style of a particular real-life artist. Canvas isn’t just stitching images together or doing something clever with textures. Canvas is creating new images, just as an artist would. A video of it in action and a beta version are both available.

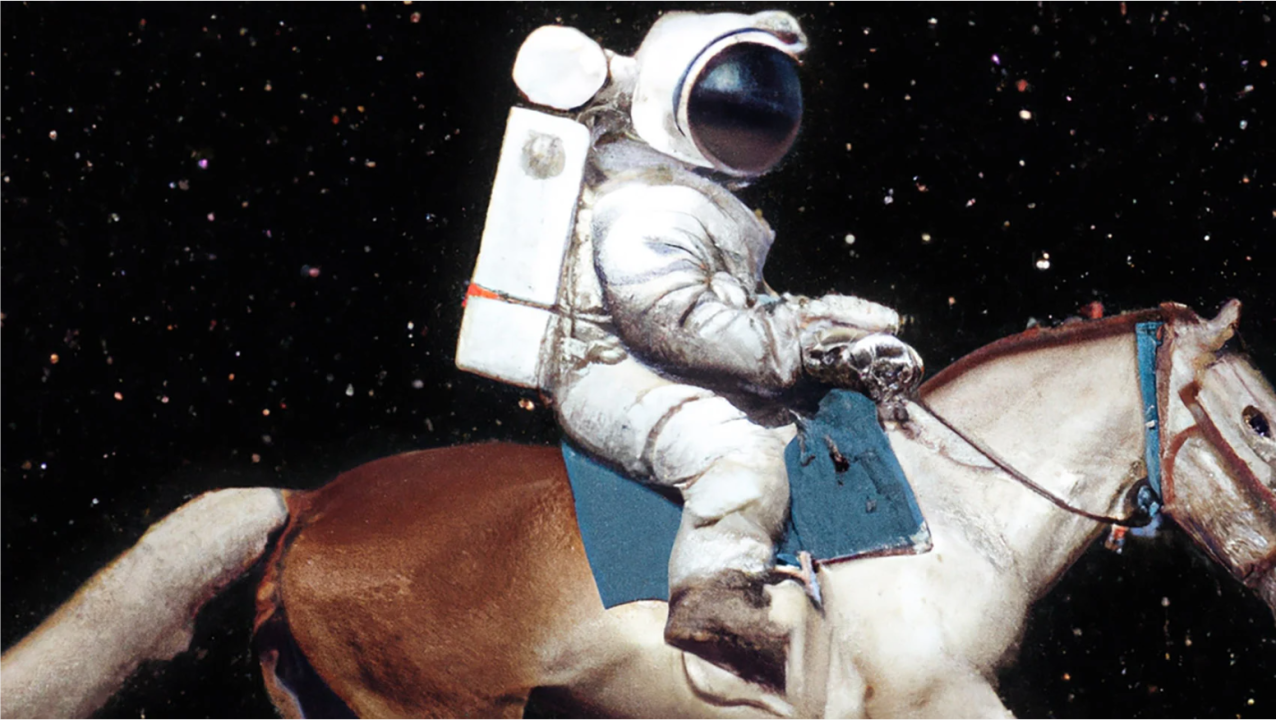

If you think Canvas is clever, have a peek at DALL-E 2. Going back to the idea of just saying it and having AI create it, DALL-E 2 uses AI to create realistic digital art from natural language descriptions. The folks at OpenAI, who built DALL-E 2, provide the best explanation of what they are aiming for…

“Our hope is that DALL·E 2 will empower people to express themselves creatively. DALL·E 2 also helps us understand how advanced AI systems see and understand our world, which is critical to our mission of creating AI that benefits humanity.”

“DALL·E 2 has learned the relationship between images and the text used to describe them. It uses a process called “diffusion,” which starts with a pattern of random dots and gradually alters that pattern towards an image when it recognizes specific aspects of that image.”
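
To make the “random dots, gradually altered towards an image” idea a little more concrete, here is a minimal toy sketch in Python. It is not DALL·E 2’s actual algorithm. The names `toy_denoise` and `distance` are made up for illustration, the “image” is just a short list of numbers, and the noise estimate is computed from a known target, whereas a real diffusion model uses a trained neural network that predicts the noise from the noisy image and the text prompt.

```python
import math
import random


def distance(a, b):
    """Euclidean distance between two equally sized lists of numbers."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def toy_denoise(target, steps=50, fraction=0.1, seed=0):
    """Toy illustration only; a hypothetical stand-in, not DALL-E 2's method.

    Starts from random noise and removes a fixed fraction of the
    remaining noise at every step, returning every intermediate pattern.
    """
    rng = random.Random(seed)
    # Start with a pattern of random dots (pure Gaussian noise).
    image = [rng.gauss(0.0, 1.0) for _ in target]
    history = [image]
    for _ in range(steps):
        # ASSUMPTION: we cheat by measuring the noise against a known target.
        # A real model would have to predict this from the noisy image alone.
        estimated_noise = [x - t for x, t in zip(image, target)]
        # Nudge the pattern a small step towards the image.
        image = [x - fraction * n for x, n in zip(image, estimated_noise)]
        history.append(image)
    return history


if __name__ == "__main__":
    target = [0.0, 0.5, 1.0, 0.5]  # a made-up four-"pixel" image
    history = toy_denoise(target)
    for step in (0, 10, 25, 50):
        print(step, round(distance(history[step], target), 4))
```

Running it prints how far the pattern is from the target at a few steps; the distance shrinks as the noise is gradually removed, which is the intuition behind the quote above.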

What DALL-E 2 can do:

– Make realistic edits to existing images from a natural language caption

– Add and remove elements while taking shadows, reflections, and textures into account

– Create original, realistic images and art from a text description

– Combine concepts, attributes and styles

– Take an image and create different variations of it inspired by the original

The image below was produced from the text “a painting of a fox sitting in a field at sunrise in the style of Claude Monet”.

Clearly, the immediate aim of both of these technologies is to enable people to create. More importantly, in the longer term, their aim is to help prove AI’s wider benefits to humanity.

Personally, I eagerly await the time when we can all create in this way in a virtual environment. My long-term view is that when AI and neural networks mature, content delivery will be very different. This doesn’t just apply to virtual environments; it will span every medium. Content will be delivered dynamically, customised specifically for you and in the context of your choice.

Take a virtual environment, for example, with deepfakes and humanlike avatars added into the mix. If you want to purchase a car in the future, you may choose to have AI create that experience in the style of Bob’s Burgers, with Ron Swanson as a salesman who is only allowed to communicate through interpretive dance… coming right up!

#creativitymatters #virtualexperience #immersiveexperience #storytellingforbusiness #AI

Related articles I found interesting:

Will AI target your job next?

https://aifuture.substack.com/p/will-ai-target-your-job-next

Tech’s New Frontier Raises a “Buffet of Unwanted Questions”

Nvidia CEO: The metaverse will be ‘much, much bigger than the physical world’

Free AI tool restores old photos by creating slightly new loved ones