The amount of hype around AI art in my news feed is overwhelming. I have a particular interest in this technology because I feel it is the first step toward the dynamic creation of virtual content, so I will be keeping a close eye on it.

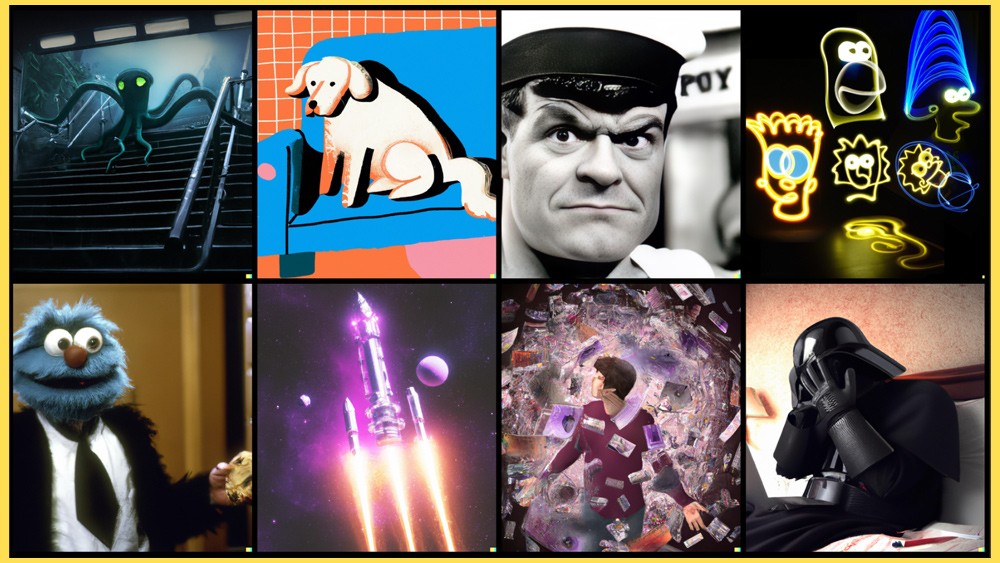

I’ve been experimenting first-hand with DALL-E and Midjourney for the last two weeks, and I’m impressed by the capabilities of the technology. I’ve generated over 1,000 images without realising it, as it gets a bit addictive once you start to understand how to communicate with the AI model. It’s worth noting that each system interprets the ‘prompt’ you send it differently. The prompt is the text string that you send the AI in order for it to generate the art. This string can contain not only subjects, elements and styles but also lighting effects, direction and several other variables that give the AI some context and composition guidance for what it generates.

I’m in several groups related to these technologies and I’ve noticed that a lot of people are struggling with the content and structure of their prompts. By struggling, I mean that they carefully construct a prompt, anticipating a particular outcome, and receive something completely different.

There is a bit of a learning curve to understanding the relevant commands, weighting and general structure of AI prompts. When I first started using DALL-E and Midjourney, I would just construct a phrase or a string of subjects and elements with little regard for weighting them or for perspective and lighting. For the most part, I wasn’t intending to create anything specific. I was more interested in how the AI would interpret what I was sending it. The randomness of the output was the exciting part.
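As a rough illustration of the structure I mean, here is a minimal Python sketch that assembles a prompt from its parts. The `Prompt` class, its field names and the `weighted` helper are hypothetical names I made up for this example; they are not part of any tool's API. The `text::weight` form is my assumption based on Midjourney's multi-prompt weighting syntax, so check the current documentation before relying on it, and remember that other systems interpret prompts differently.

```python
from dataclasses import dataclass, field


@dataclass
class Prompt:
    """Hypothetical container for the parts of a text-to-image prompt."""
    subjects: list[str]
    styles: list[str] = field(default_factory=list)
    lighting: str = ""
    direction: str = ""

    def build(self) -> str:
        # Order the parts: subjects first, then styles, lighting, direction.
        parts = [*self.subjects, *self.styles, self.lighting, self.direction]
        # Drop empty parts and join the rest into one comma-separated string.
        return ", ".join(p.strip() for p in parts if p.strip())


def weighted(parts: dict[str, float]) -> str:
    """Join parts as 'text::weight' (assumed Midjourney-style weighting)."""
    return " ".join(f"{text}::{weight:g}" for text, weight in parts.items())


prompt = Prompt(
    subjects=["lighthouse on a cliff"],
    styles=["watercolour"],
    lighting="golden hour",
    direction="low angle",
)
print(prompt.build())
print(weighted({"lighthouse": 2, "storm clouds": 1}))
```

Keeping each part in its own field makes it easier to change one variable at a time, such as the lighting, and compare what the model does with it.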

Despite the fun I was having, I quickly realised that there was a way that our team at Hidden could use this technology within our production process.

A significant portion of what we do at Hidden involves bringing a client’s vision to life in a virtual environment. This often involves sketching, storyboarding and trying to convey what is in one person’s mind to a group of people. Sketching and storyboarding are time-consuming activities that leave a lot to be desired, and trying to read someone’s mind is impossible unless you’re Derren Brown.

In short, I think there are multiple uses for this technology within our processes. For this reason, I’ve organised some experiments with our team that will hopefully better define these uses and allow us to establish some form of theme that works for each.

One concern I had about the experiment was the learning curve that my team would have to overcome to interact proficiently with the AI model. Then I stumbled upon a couple of very interesting utilities that have been created specifically to flatten that curve.

The first is specific to Midjourney and aptly called ‘Midjourney Prompt Builder’. It comes in the form of a Google Sheet and allows you to build a prompt without having to concern yourself with the specific details I mentioned above, as they are all listed as options for you. This is a great tool for familiarising yourself with the technical capabilities of the model.

The second is ‘PromptoMANIA’, which seems to have the broader ambition of being the online prompt builder for the entire AI art community. It is a web-based prompt-building user interface for Midjourney, Stable Diffusion and DreamStudio. It also provides an option for creating generic prompts.

Our primary experiments will focus on images that we can produce and build upon, as well as on how the AI can be used during the development of ideas. I will post the results of the experiments in an article once they are completed.

As always, we are interested in things that accelerate the development of the metaverse. We feel this is a positive step in a journey toward the generative creation of virtual content.

#metaverse #generativeart #ai #midjourney #stablediffusion #dreamstudio